The one-two punch that will energize the future of data exchange

Ashley Mulligan

Head of Growth Product & Developer Experience in Engineering


Let’s face it: big data is a living beast with some serious boundary issues. Economic figures may ebb and flow, but the volume of information added to the global datasphere keeps climbing. As it accumulates, we have to get creative with how we store, process, and share it. If we don’t, before long we might be looking at a future reminiscent of a scene from the 1958 sci-fi classic “The Blob.” Except . . . you know . . . with data.

A report on the future of data sharing by Boston Consulting Group (BCG) envisions a scenario where data is primarily stored not in proprietary systems controlled by disparate private entities, but in a federated cloud-based system, to be accessed as needed across unlimited vertical industries and use cases. This vision of federated data offers the possibility of faster access and greater availability. But it also comes with its own bag of headaches. How do we ensure that this data is secure, and how do we provide approved access to it? Ensuring that your data exchange solution meets all applicable security standards is essential if you are going to collaborate with other businesses.

AI leads the way

There is no denying that the big data management situation is only getting more complex, and that what is needed now is a way to simplify, expedite, and secure the data exchange process. This is not the time for isolated systems that meet the needs of a single customer or a single use case. It is the time for a universal data management platform: an agnostic, global solution to the big data problem that can identify, access, reformat, and securely transfer data from one application to another. It is a solution that will rely heavily on artificial intelligence (AI) to process the data quickly and machine learning (ML) to adapt to new scenarios going forward.

AI uses algorithms to make decisions based on the data it encounters. An AI-equipped data exchange platform is going to understand how to process, manage, format, and share that data.

ML is a subset of AI that describes the ability to learn new ways of processing data based on previous AI encounters and on-the-fly decisions made with human assistance. This “human in the loop” scenario is an important aspect of machine learning that enables optimization of the system based on new rules and knowledge.

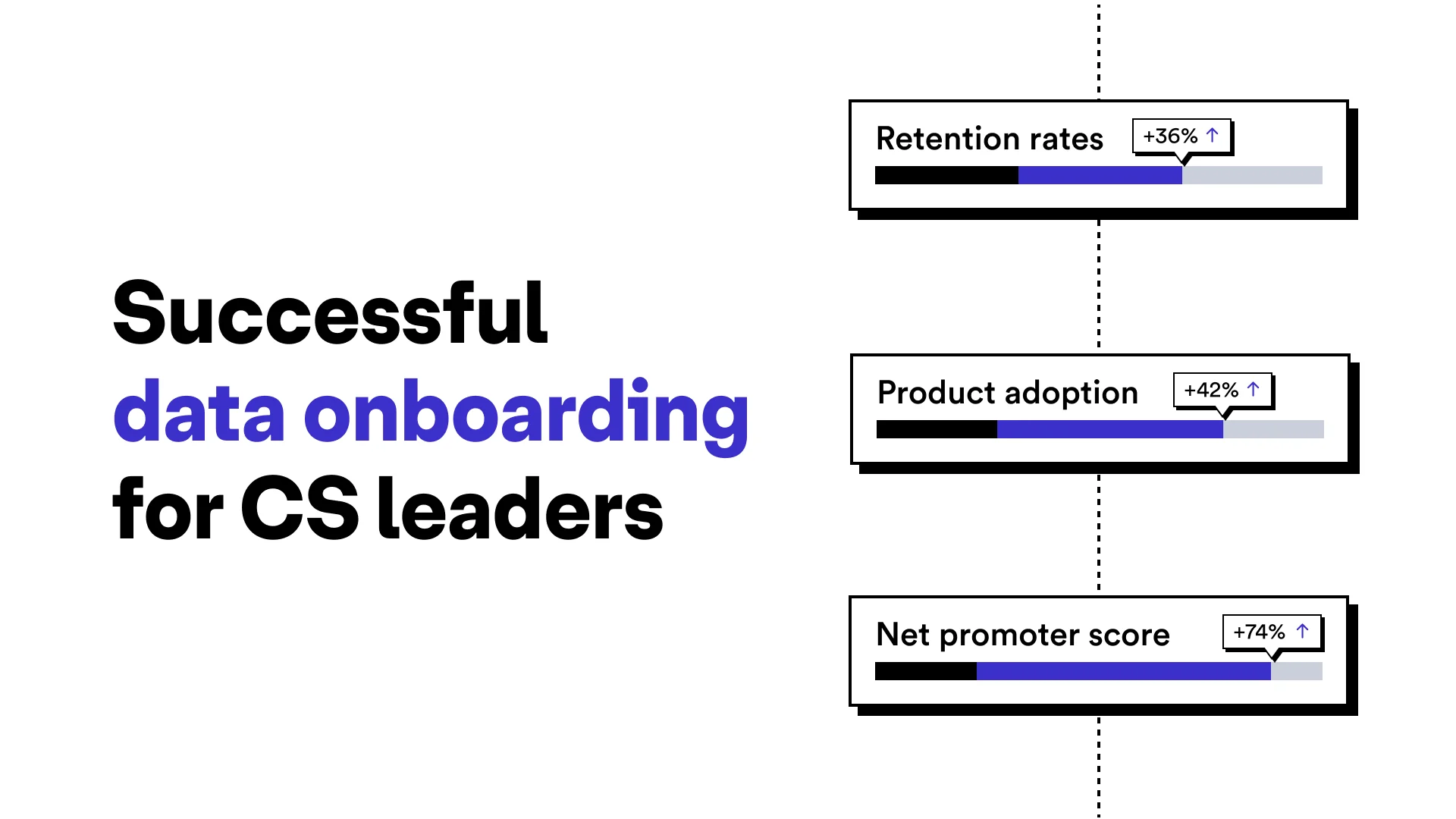

Together, AI and ML provide the one-two punch that will energize the future of data exchange and data sharing.

A bright, not-so-distant future

A recent article published in the Journal of the American Medical Informatics Association touts a study of the potential of federated learning, a technique where multiple parties collaboratively train AI models on disparate data sets without pooling the raw data in one place. It suggests that federated learning models can have benefits over traditional methods, including enhanced image and text analysis and decreased time-to-market. This study, while narrow in its practical scope, has implications for the greater business world, looking forward to a time when broader distribution of data access will lead to more thorough, comprehensive analysis. Provided the industry sets a strong foundation for security, such a framework could usher in exponential growth in data processing and analysis.
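To make the idea of federated learning a little more concrete, here is a minimal sketch of one common approach, federated averaging, written in plain Python. It is not taken from the study mentioned above. The one-parameter model, the function names, and the two "hospital" data sets are all hypothetical, chosen only to show that each party trains on its own records and sends back a model weight rather than the records themselves.

```python
from typing import List, Tuple

Dataset = List[Tuple[float, float]]  # (x, y) pairs held privately by one party


def local_update(w: float, data: Dataset, lr: float = 0.01, epochs: int = 5) -> float:
    """Run a few gradient-descent steps for the model y = w * x on one party's data."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(updates: List[Tuple[float, int]]) -> float:
    """Combine local weights, weighting each party by its number of samples."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train(parties: List[Dataset], rounds: int = 50) -> float:
    w = 0.0  # shared global model, starts untrained
    for _ in range(rounds):
        # Each party trains locally; only the updated weight leaves the party.
        updates = [(local_update(w, data), len(data)) for data in parties]
        w = federated_average(updates)
    return w


if __name__ == "__main__":
    # Hypothetical data: two parties whose private records both follow y = 3x.
    hospital_a = [(1.0, 3.0), (2.0, 6.0)]
    hospital_b = [(3.0, 9.0), (4.0, 12.0), (5.0, 15.0)]
    print(round(train([hospital_a, hospital_b]), 2))  # approaches 3.0
```

A real system would add secure aggregation, authentication, and far richer models. The point of the sketch is only the shape of the loop: train locally, share the update, average centrally.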

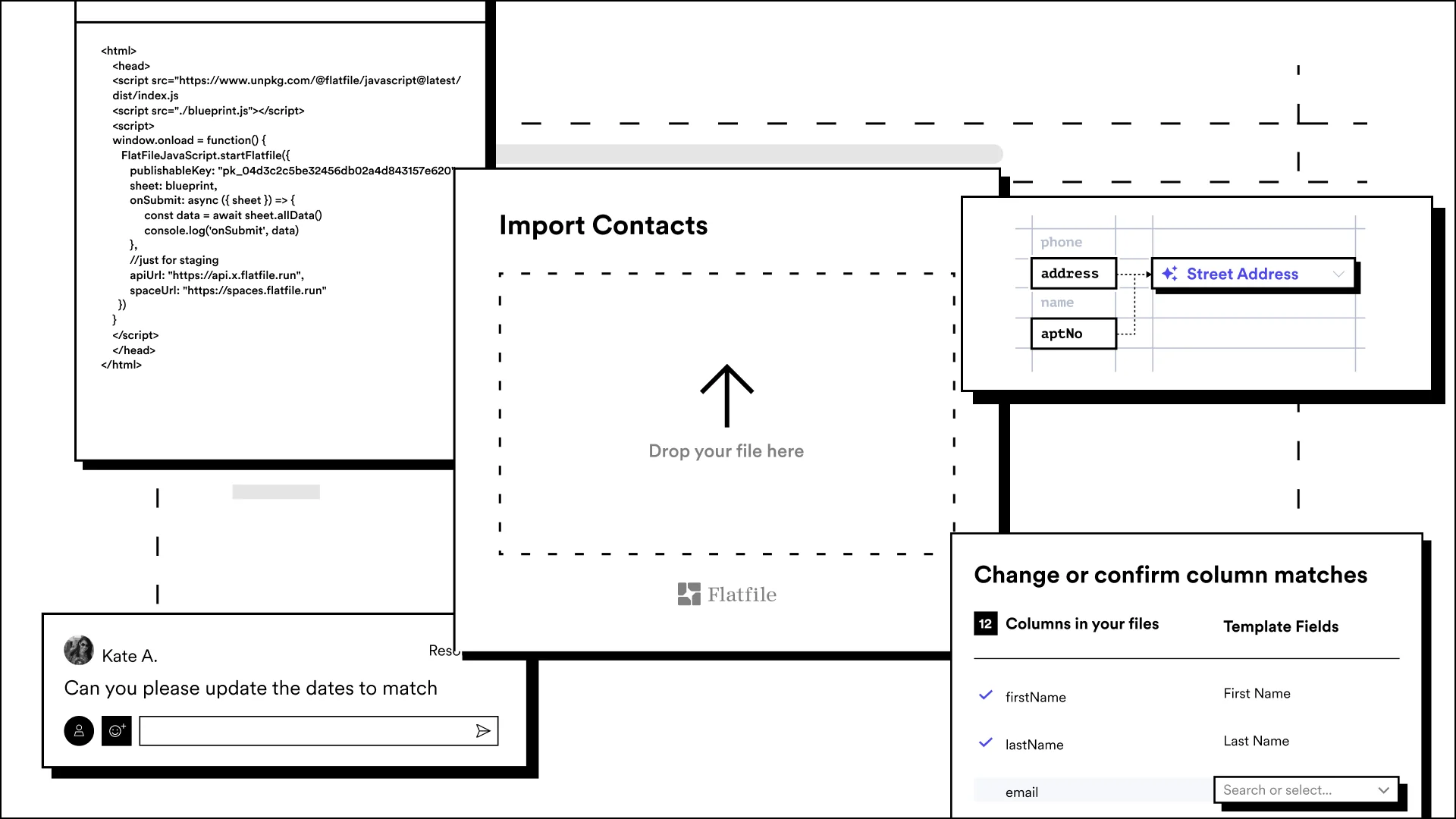

In the short term, however, far too many businesses are still stuck in the dark ages of downloading comma-separated values (.csv) files, scrubbing them by hand, and uploading them to Internet of Things (IoT) and software as a service (SaaS) platforms to keep our ever-data-obsessed world running.

That’s the data exchange equivalent of hitting your space bar twice after each sentence.

Or keeping your data files on a 3.5-inch floppy disk.

Or worse yet, trying to outrun The Blob.
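For a sense of what that hand-scrubbing involves, here is a rough sketch of the same chore done with Python's standard `csv` module. The function name `scrub_csv` and the specific cleanup rules (trim whitespace, tidy the header names, drop blank and duplicate rows) are assumptions picked for illustration, not a description of how any particular platform works.

```python
import csv
import io


def scrub_csv(raw: str) -> str:
    """Return cleaned CSV text: trimmed cells, tidy headers, no blank or duplicate rows."""
    reader = csv.reader(io.StringIO(raw))
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")

    header = next(reader, None)
    if header is None:  # empty input, nothing to clean
        return ""
    # Normalize header names, e.g. " First Name " -> "first_name".
    writer.writerow([h.strip().lower().replace(" ", "_") for h in header])

    seen = set()
    for row in reader:
        cells = tuple(cell.strip() for cell in row)
        if not any(cells):  # skip rows that are entirely empty
            continue
        if cells in seen:  # skip exact duplicates
            continue
        seen.add(cells)
        writer.writerow(cells)
    return out.getvalue()


if __name__ == "__main__":
    messy = " First Name ,Email\n Ada , ada@example.com\n\n,\nAda,ada@example.com\n"
    print(scrub_csv(messy))
```

Even this small script only covers the easy cases. Real files bring mismatched columns, odd encodings, and ambiguous dates, which is where the adaptive, human-in-the-loop approach described earlier comes in.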

Goodness gracious, you can get by without Flatfile. But why even try?